Some Thoughts On Artificial Intelligence

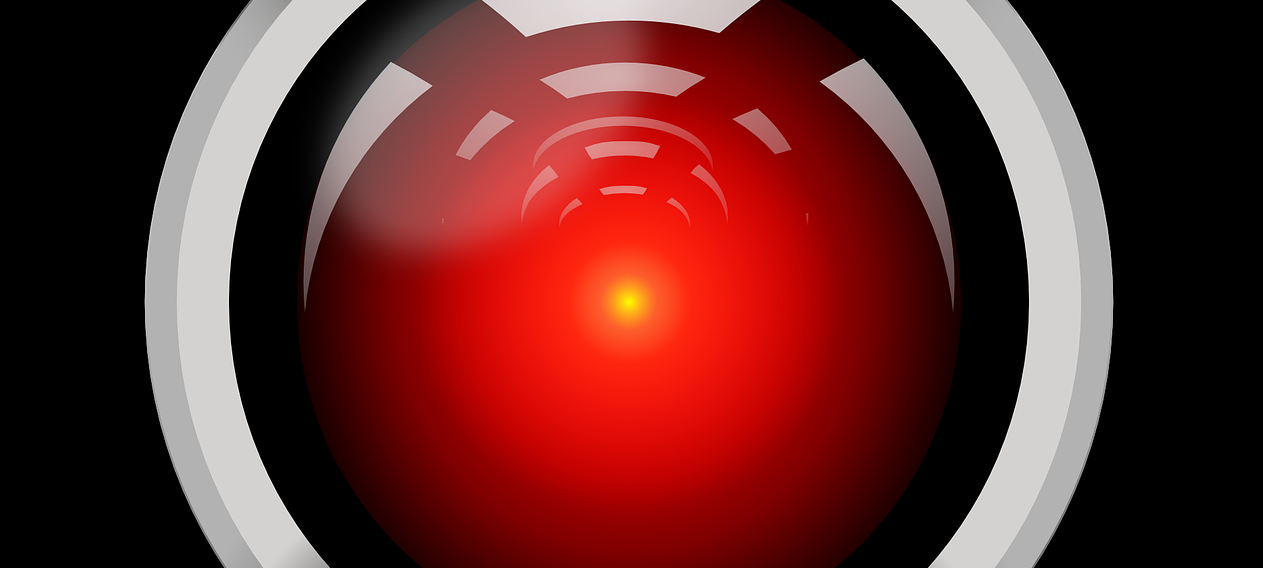

“Open the pod bay doors, HAL,” says Bowman. HAL replies: “I’m sorry, Dave. I’m afraid I can’t do that.” This is a famous exchange from the cult film 2001: A Space Odyssey, released in 1968, in which HAL, a self-aware computer, turns on his human crew. The year 2001 has long since passed, so how far away are we from self-aware computers?

Traditional computer programs are like cookbooks: they carry out exactly what is written in the recipe. Describing a precise procedure for finding the path from point A to point B on a map is simple, but it is quite complicated to do the same thing for finding a cat in a picture. Ask yourself: what exactly is a cat? Can you think of a definition under which a cat would not be confused with other animals? How did you learn what a cat is? Someone probably pointed at one and told you that it is a cat.
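To make the cookbook idea concrete, here is a minimal sketch of such a recipe in Python: a breadth-first search that finds a route with the fewest hops between two points. The map `roads` and the function name `shortest_path` are made up for illustration; real route planners also account for distances and traffic.

```python
from collections import deque

def shortest_path(graph, start, goal):
    """Breadth-first search: return a path with the fewest hops, or None."""
    queue = deque([[start]])   # each queue entry is a path explored so far
    visited = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == goal:
            return path
        for neighbour in graph.get(node, []):
            if neighbour not in visited:
                visited.add(neighbour)
                queue.append(path + [neighbour])
    return None  # the goal cannot be reached from the start

# Hypothetical toy map: each place lists the places reachable from it.
roads = {
    "A": ["C", "D"],
    "C": ["E"],
    "D": ["B"],
    "E": ["B"],
    "B": [],
}

print(shortest_path(roads, "A", "B"))  # ['A', 'D', 'B']
```

Every step is spelled out in advance, and the program never does anything the recipe does not say. Nobody knows how to write an equally short recipe for "find the cat".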

Machine learning tries to mimic this process. We give the computer example pictures with cats and pictures without cats, and we tell it which is which. With enough training data, the program can learn to recognise a cat, but in contrast to traditional programming, we often cannot easily tell how the algorithm arrived at its result. This is a big step away from cookbooks. Although progress has been made over the last 70 years, the growth in computational power in recent years has greatly accelerated development.
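Learning from labelled examples can be sketched in a few lines of Python. This is a toy nearest-centroid classifier, not how real image recognisers work: the two numeric features (say, ear pointiness and whisker length) and all the values are invented for illustration, and `train` and `predict` are hypothetical names. Unlike the large models used in practice, this one is simple enough that we can still see why it decides as it does.

```python
def train(examples):
    """Average the feature vectors of each label (a nearest-centroid model)."""
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}

def predict(model, features):
    """Return the label whose average example is closest to the input."""
    def squared_distance(centroid):
        return sum((a - b) ** 2 for a, b in zip(centroid, features))
    return min(model, key=lambda label: squared_distance(model[label]))

# Made-up training data: (features, label) pairs supplied by a human "teacher".
examples = [
    ([0.9, 0.8], "cat"),
    ([0.8, 0.9], "cat"),
    ([0.2, 0.1], "not cat"),
    ([0.1, 0.3], "not cat"),
]

model = train(examples)
print(predict(model, [0.7, 0.7]))  # cat
```

Note that nowhere in the code is there a definition of a cat. The program only ever sees examples and labels, just like the child being shown one.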

Recognising a cat in a picture is only one example; there is a lot more to machine learning. Virtual assistants use it to understand spoken language and to produce fluent speech. Advertising companies use it widely to learn about our behaviour and target ads at us. A good example of the progress made is AlphaGo, a program that defeated a world champion at the Chinese board game Go, nearly two decades after a computer first defeated the world champion in chess. This was a significant development because Go is far more complex than chess: there are too many possible positions to search through exhaustively, and there is no simple rule for judging which player is ahead.

Sci-fi often presents us with the idea that artificial intelligence is going to turn on humanity because it is evil by design. That is not necessarily true: how an AI behaves depends largely on what it learns. For instance, a few years ago Microsoft launched a Twitter chatbot called Tay.ai. In some regions, people started trolling it with racist comments, and as a result it became racist itself. That shows the importance of the initial learning phase. Although Tay.ai is more of a simulation than a real intelligence, it raises the question: should we teach ethics in programming? And if programmers should be held responsible for their creations, should the workers in a machine-gun factory not be held responsible for the dead, too? This is a debate we need to have as a society.

In conclusion, computers as capable as HAL are at least a decade away, yet recent developments in machine learning have brought us much closer. They show great potential, and possibly great danger, too. In my introduction, HAL seems evil, but he is not. He is only protecting his mission at any cost, because he was taught to do so. The same goes for Tay.ai, a real-world example which showed that it is up to us what AI will become. As a society, we have to prepare for the appearance of self-aware computers in advance, and so it is crucial to define morals, rights, and obligations for them.